[Presentation link]

If you want to know what’s happening inside an open-source LLM, you must first grapple with a four-dimensional grid of activations spread across layers, positions, heads, and hidden dimensions. The mechanistic interpretability community has invented a set of methods for this, such as logit lenses, future lenses, activation patching, and attention-head probing. Each typically lives in its own codebase, with potentially subtly different implementation details.

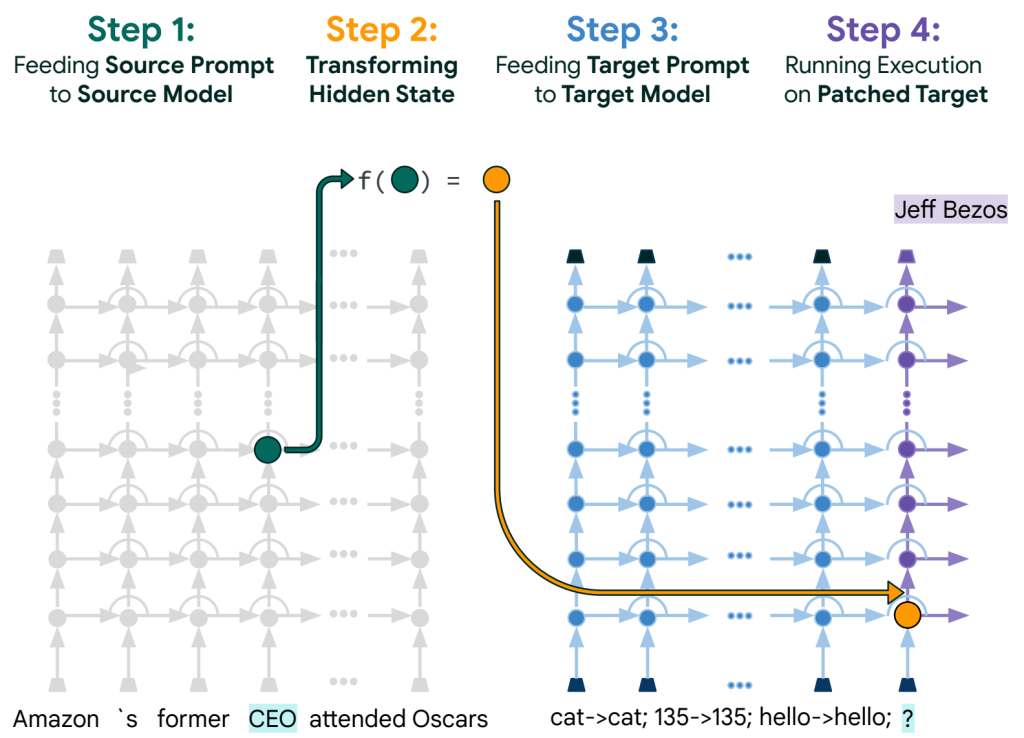

In January 2024, Google PAIR’s Patchscopes paper made a nice observation: every one of these methods is the same operation with different parameters. You take a hidden state from a source forward pass and patch it (possibly via some mapping) into a target forward pass. The source side is specified by a tuple (prompt, position, model, layer), and the target side by a matching tuple that also carries the mapping. The logit lens is one choice of those tuples, token identity is another, cross-model patching is another. Once you stare at it, every “new” lens is just another point in a 5-tuple parameter space, and the case for implementing each one separately weakens.
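To make the "same operation, different parameters" point concrete, here is a minimal sketch of the patching step on a toy model. Nothing here comes from the paper's code or from any library: `make_toy_model`, `run`, and `patch` are hypothetical names, and the "model" is just a stack of simple arithmetic layers standing in for a transformer.

```python
from typing import Callable, List, Optional, Tuple

Vector = List[float]
Hidden = List[Vector]            # one vector per token position
Layer = Callable[[Hidden], Hidden]


def make_toy_model(n_layers: int, scale: float) -> List[Layer]:
    """Hypothetical stand-in for an LLM: layer k maps x to scale * x + k."""
    def make_layer(k: int) -> Layer:
        return lambda h: [[scale * x + k for x in vec] for vec in h]
    return [make_layer(k) for k in range(n_layers)]


def run(
    model: List[Layer],
    embeddings: Hidden,
    read: Optional[Tuple[int, int]] = None,
    write: Optional[Tuple[int, int, Vector]] = None,
) -> Tuple[Hidden, Optional[Vector]]:
    """Forward pass that can read or overwrite one (layer, position) slot.

    "Hidden state at layer k" means the output of layer k in this sketch.
    """
    hidden = [list(v) for v in embeddings]
    captured = None
    for k, layer in enumerate(model):
        hidden = layer(hidden)
        if write is not None and write[0] == k:
            hidden[write[1]] = list(write[2])   # overwrite, then keep computing
        if read is not None and read[0] == k:
            captured = list(hidden[read[1]])
    return hidden, captured


def patch(
    source_model, source_emb, layer, position,
    target_model, target_emb, target_layer, target_position,
    mapping: Callable[[Vector], Vector] = lambda v: v,
) -> Hidden:
    """Read one hidden state from the source pass, map it, write it into the target pass."""
    _, state = run(source_model, source_emb, read=(layer, position))
    final, _ = run(
        target_model, target_emb,
        write=(target_layer, target_position, mapping(state)),
    )
    return final


model = make_toy_model(n_layers=3, scale=2.0)
prompt = [[1.0], [2.0]]

# Logit-lens-style choice: same prompt, same model, write into the final layer.
lens_out = patch(model, prompt, 1, 1, model, prompt, 2, 1)

# A different point in the same parameter space: other prompt, early target layer.
other_out = patch(model, prompt, 1, 1, model, [[0.0], [0.0]], 0, 0)
```

Both calls go through the same `patch` function; only the arguments differ. In a real setting the final hidden states would be decoded into tokens, which this toy skips.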

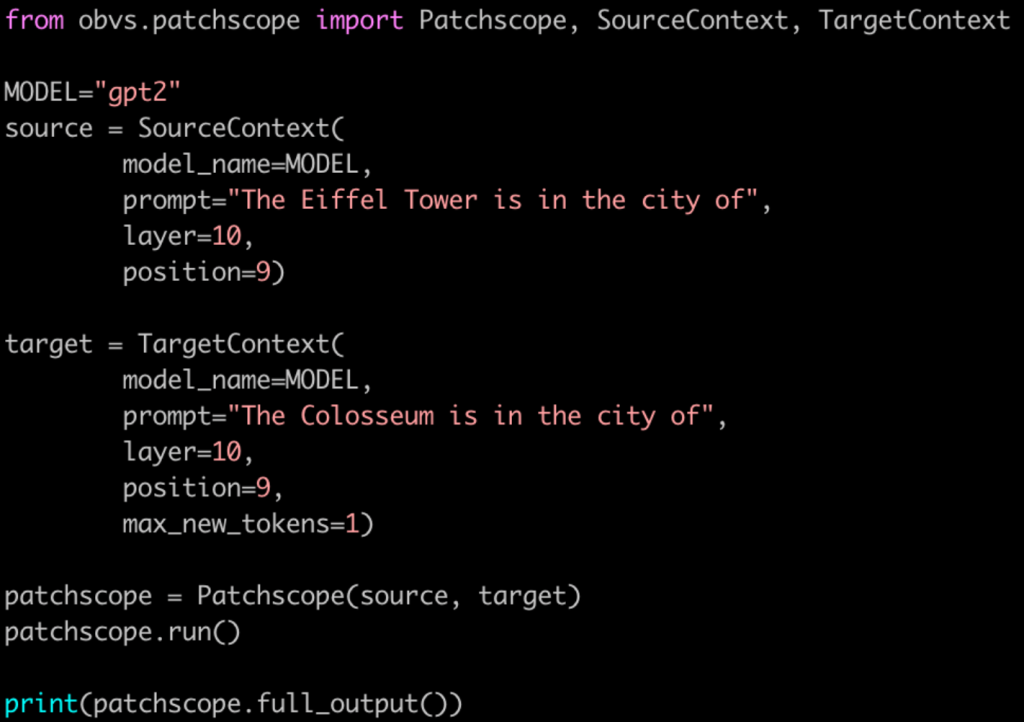

obvs is the library my AI Safety Camp team wrote (GitHub, PyPI) to give mechanistic interpretability researchers an implementation of the methods that fall under this abstraction. Much of the library is essentially three objects: a SourceContext and a TargetContext that mirror the paper’s tuples one-for-one, and a Patchscope that, given those two objects, handles the traced forward passes, the residual-stream intervention, and the generation that follows, all on top of nnsight. Because every lens is now the same object with different parameters, experimentation moves closer to editing configuration files than to battling implementation details. Loop over layers to watch the model’s answer take shape, swap the target prompt to ask the model to describe its own hidden state in plain English, or patch into a bigger LLM to get a richer description. It is a small attempt to make a few mechanistic interpretability methods more accessible to researchers.
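To illustrate what "experimentation as configuration" can look like, here is a rough sketch using plain dataclasses. This is not obvs's actual API: `SourceSpec`, `TargetSpec`, `logit_lens`, `token_identity`, and `layer_sweep` are hypothetical names invented for this example, the model name is a placeholder, and the few-shot target prompt is only illustrative.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple

Vector = List[float]


def identity(v: Vector) -> Vector:
    return v


@dataclass(frozen=True)
class SourceSpec:
    """Hypothetical source tuple: where the hidden state is read from."""
    prompt: str
    position: int
    model_name: str
    layer: int


@dataclass(frozen=True)
class TargetSpec:
    """Hypothetical target tuple: where the hidden state is written to."""
    prompt: str
    position: int
    model_name: str
    layer: int
    mapping: Callable[[Vector], Vector] = identity


def logit_lens(source: SourceSpec, n_layers: int) -> TargetSpec:
    """Same prompt, position, and model; write into the final layer."""
    return TargetSpec(source.prompt, source.position, source.model_name, n_layers - 1)


def token_identity(source: SourceSpec, target_layer: int) -> TargetSpec:
    """Few-shot 'repeat the token' target prompt; patch into its last position."""
    prompt = "cat -> cat; 1135 -> 1135; hello -> hello; ?"  # illustrative prompt
    return TargetSpec(prompt, -1, source.model_name, target_layer)


def layer_sweep(
    source: SourceSpec, target: TargetSpec, n_layers: int
) -> List[Tuple[SourceSpec, TargetSpec]]:
    """One configuration per source layer; everything else is held fixed."""
    return [(replace(source, layer=k), target) for k in range(n_layers)]


source = SourceSpec(prompt="The Eiffel Tower is in", position=-1,
                    model_name="toy-model", layer=0)
configs = layer_sweep(source, logit_lens(source, n_layers=4), n_layers=4)
```

Each entry in `configs` would then be handed to whatever runs the two forward passes. Switching from `logit_lens` to `token_identity` changes the experiment without touching any patching code.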